Lessons

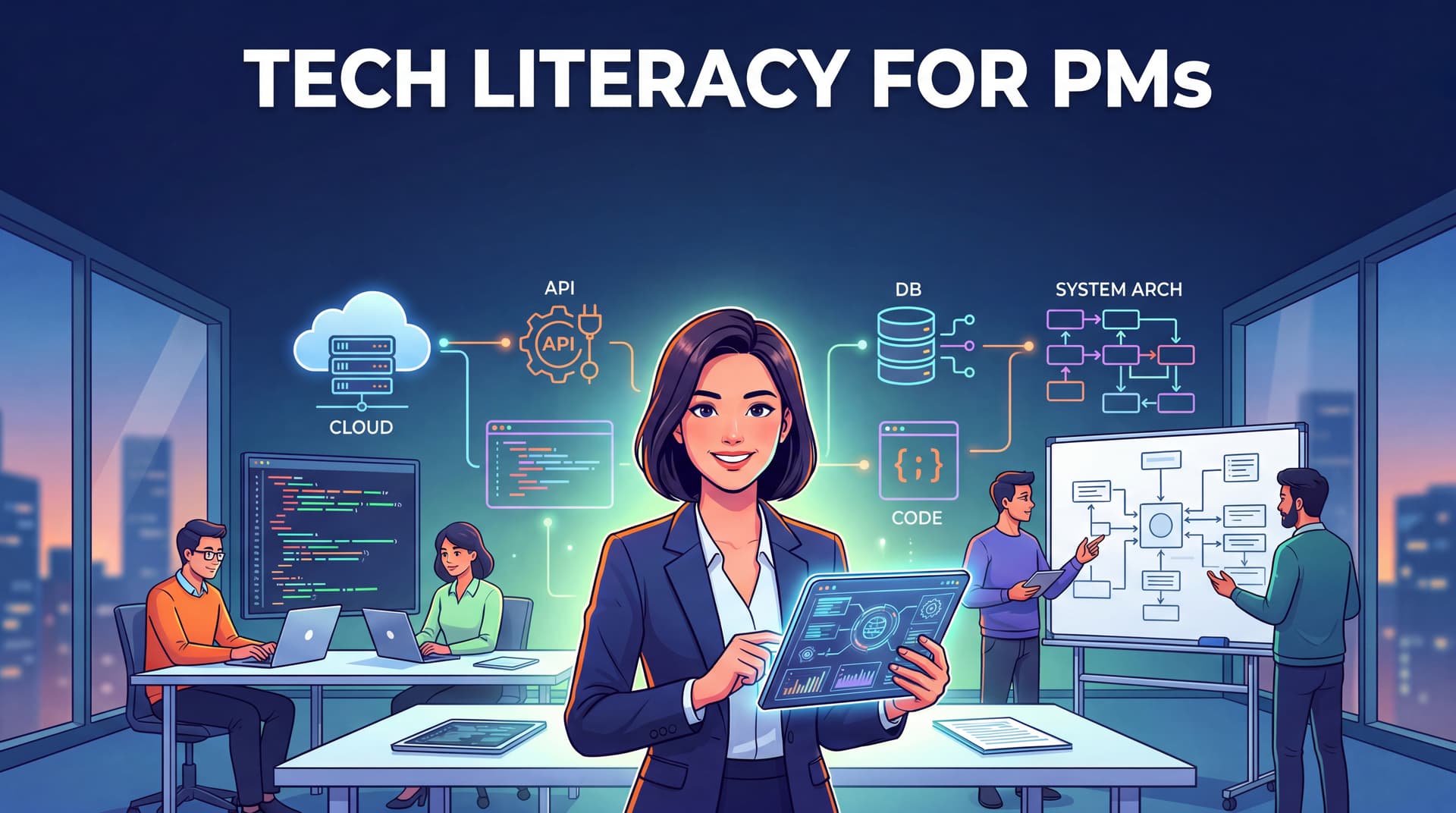

Session 01: Web Fundamentals (From URL to Browser)

Understand how the web works from the ground up: client-server, HTTP, the three frontend layers, APIs, and HTTP status codes, all from a PM's perspective.

Session 03: APIs in Depth (REST, Auth, and Rate Limiting)

Read API docs, understand how auth works, and use Postman to test APIs without writing a single line of code.

Session 04: System Architecture (Monolith, Microservices, and Queues)

Understand how architectural decisions affect delivery speed, and ask the right questions when an architect proposes changes to the system.

Session 05: Cloud & Deployment (Docker, CI/CD, and Release Planning)

Understand the pipeline from code to production, why staging exists, and how to plan releases that are not blocked by DevOps.

Session 06: Security for PMs (Auth, OWASP, and Data Privacy)

Understand the common security vulnerabilities that PMs unintentionally create when writing specs, and how to write sound security requirements.

Session 07: Performance & Scaling (Bottlenecks, CDN, and Web Vitals)

Read a Lighthouse report, pinpoint the actual bottleneck, and write measurable performance requirements.

Session 08: Working with Eng (Estimation, Technical Debt, and Incident Response)

Understand why estimates are always wrong, how to frame technical debt as product risk, and the PM's role in incident response.

Session 09: AI for PMs (Understanding AI to Make Better Product Decisions)

Understand how LLMs work, when to use AI, whether to build or buy, and the risks PMs need to know about when integrating AI into a product.

Lesson 01: Why Annotation Matters

Understand why data annotation is the highest-leverage activity in applied ML, how the tool landscape has evolved, and where AnyLabeling fits.

Lesson 02: Installation & First Labels

Install AnyLabeling via pip, binary, or GPU-accelerated package, tour the interface, and annotate your first image in under five minutes.

Lesson 03: Manual Annotation Deep Dive

Master every annotation type in AnyLabeling — rectangles, polygons, circles, lines, points, and rotated bounding boxes — with the techniques that make manual labeling fast and precise.

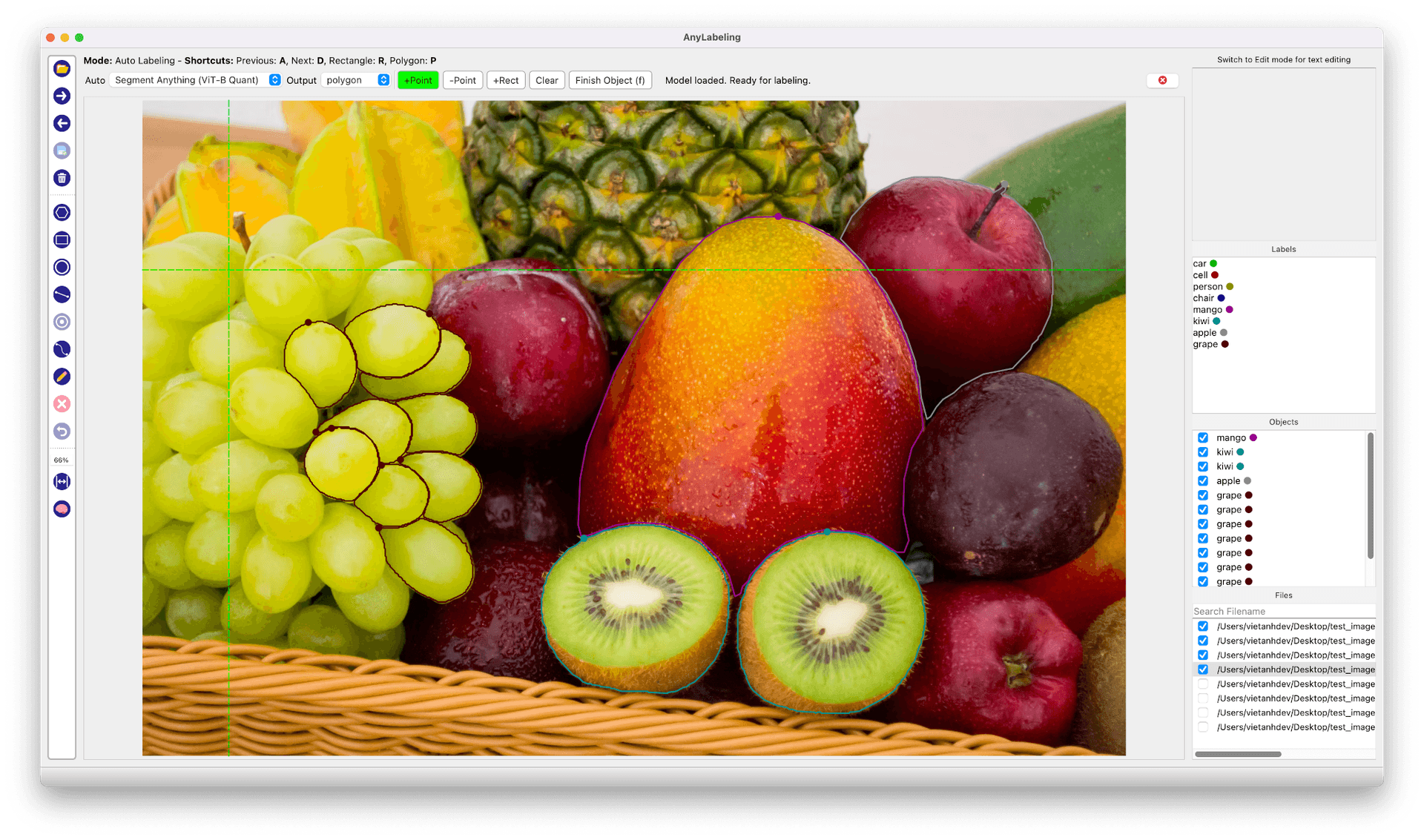

Lesson 04: SAM Auto-Labeling

Use Segment Anything models (SAM, SAM 2, SAM 2.1, SAM 3, MobileSAM) for one-click segmentation — point prompts, rectangle prompts, text prompts, and the workflow that makes it fast.

Lesson 05: YOLO Auto-Labeling

Use YOLOv5 and YOLOv8 models to auto-generate bounding boxes and segmentation masks, then review and correct the results for production-quality datasets.

Lesson 06: Text, OCR & Key Information Extraction

Annotate text in images — detection regions, transcription, and structured Key Information Extraction (KIE) for documents, receipts, and scene text.

Lesson 07: Export Formats & Pipelines

Export annotations to COCO, YOLO, Pascal VOC, and CreateML formats — understand when to use each one and build conversion scripts for your training pipeline.

Lesson 08: Custom Models for Auto-Labeling

Load your own ONNX models into AnyLabeling — convert from PyTorch or Ultralytics, write the config.yaml, and use domain-specific models as auto-labeling backends.

Lesson 09: Writing Annotation Guidelines

Write annotation guidelines that eliminate ambiguity, handle edge cases, and produce consistent labels across annotators — the most underrated skill in applied ML.

Lesson 10: Active Learning Pipelines

Build a label-train-relabel loop that improves both your model and your dataset with every iteration — confidence-based sampling, model-in-the-loop annotation, and knowing when to stop.

Lesson 11: Scaling Annotation for Teams

Scale annotation beyond a single person — multi-annotator workflows, quality assurance reviews, conflict resolution, and dataset versioning for production ML teams.

Lesson 01: What is DaisyKit?

Understand the motivation behind DaisyKit, its graph-based pipeline architecture, and how it compares to frameworks like MediaPipe and OpenCV.

Lesson 02: Installation & First Program

Install DaisyKit on Linux or Windows, understand the get_asset_file model registry, and run your first face detection flow on a static image.

Lesson 03: Face Detection & Landmarks

Run real-time face detection with YOLO Fastest and 68-point facial landmark regression with PFLD — including mask detection — using FaceDetectorFlow.

Lesson 04: Human Pose Estimation

Detect 17 body keypoints in real time using SSD-MobileNetV2 person detection and Google MoveNet Lightning — with HumanPoseMoveNetFlow.

Lesson 05: Background Matting

Replace video backgrounds in real time using AI portrait segmentation with BackgroundMattingFlow — no green screen required.

Lesson 06: Hand Pose Detection

Detect 21 3D keypoints per hand in real time using YOLOX hand detection and the Google MediaPipe hand pose model, with HandPoseDetectorFlow.

Lesson 07: Object Detection with YOLOX

Detect 80 COCO object classes in real time using YOLOX via ObjectDetectorFlow — and learn how to filter classes and use custom-trained models.

Lesson 08: Barcode & QR Code Scanning

Scan QR codes, barcodes, and 2D codes in real time using BarcodeScannerFlow — powered by ZXing-CPP with no deep learning required.

Lesson 09: Mobile Deployment

Run all DaisyKit AI flows on Android and iOS devices using the prebuilt native SDKs — no server, no cloud, fully on-device inference.

Lesson 10: Custom Models & C++ SDK

Integrate custom NCNN models into DaisyKit flows, use the C++ graph API for concurrent pipelines, and build production-grade AI applications.

Lesson 07: Edge Detection

Learn how to detect edges in images using Sobel, Laplacian, and Canny edge detectors in OpenCV.

Lesson 08: Thresholding & Morphological Operations

Learn how to segment images with thresholding and refine shapes with morphological operations in OpenCV 4.x.

Lesson 09: Contours & Shape Analysis

Find, draw, and analyze contours to detect and measure shapes in images using OpenCV 4.x.

Lesson 10: Feature Detection & Matching

Detect keypoints and match features across images using SIFT, ORB, and FLANN in OpenCV 4.x.

Lesson 11: Video Processing & Optical Flow

Read, write, and process video streams. Track motion with background subtraction and optical flow in OpenCV 4.x.

Lesson 12: Deep Learning with the OpenCV DNN Module

Run YOLO26, ONNX, and other deep learning models for object detection and image classification — using the Ultralytics API and the OpenCV DNN module.

Lesson 13: Real-World Project — Real-Time Object Tracker

Build a complete real-time multi-object tracking application using YOLO26 detection and OpenCV trackers for persistent IDs.

Lesson 06: Image Filtering for Image Enhancement

Apply average, Gaussian, median, and bilateral blurring, then sharpen images with high-pass filters, unsharp masking, and the Laplacian operator.

Lesson 05: Color Spaces

Master RGB, HSV, Grayscale, and LAB color spaces — convert between them and use HSV masking for real-world color-based object detection.

Lesson 04: Basic Image Processing

Load, display, resize, rotate, crop, and flip images with OpenCV — using real downloadable test images.

Lesson 03: What is OpenCV?

Install OpenCV, run your first program, and understand the BGR color convention that underpins every OpenCV operation.

Lesson 02: What is Computer Vision?

Understand how machines perceive images, explore real-world CV applications, and see a live YOLO26 object detection example.

Lesson 01: Introduction to OpenCV Course

Course overview and outline — what you will build, what tools you need, and how the lessons are structured.